The HBM memory shortage is the real reason your favorite AI models keep getting delayed. Earlier in the year, companies like OpenAI and Google pushed back their biggest releases and many of them blamed it on a lack of memory chips.

More specifically, the shortage is in a special type of memory called High Bandwidth Memory (HBM). Without it, even the fastest AI processor is useless, and right now there is not enough to go around.

What HBM Does

First, what does HBM do?

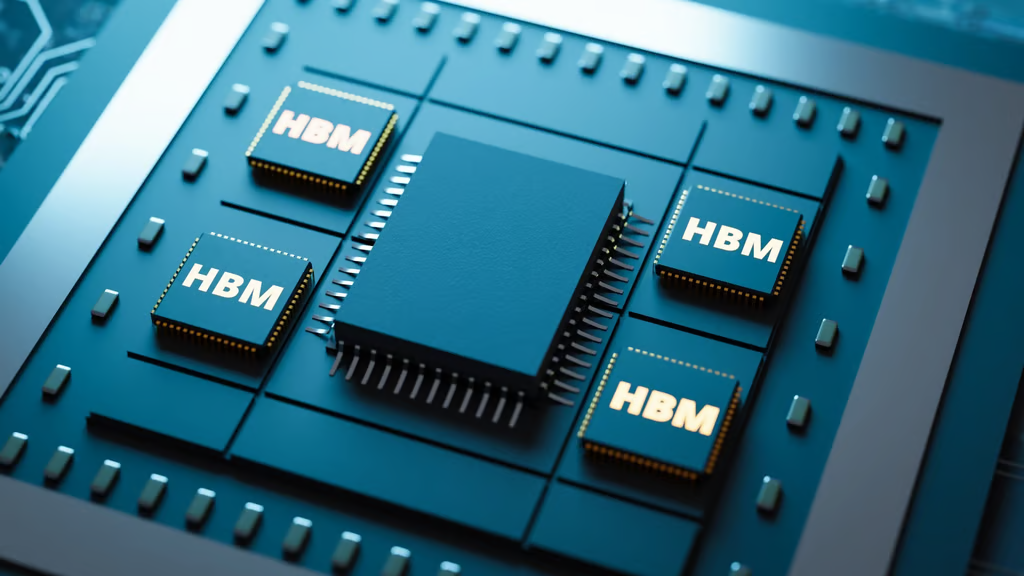

An AI chip needs data to process. The faster it gets that data, the faster it works. HBM is a type of memory that sits very close to the processor. It moves data extremely quickly. In contrast, ordinary memory is slower and located farther away.

As a result, an AI chip with HBM runs much faster than one without, so every AI company wants as much HBM as possible. But the shortage means fewer AI chips can be finished on schedule, and your chatbot keeps getting delayed.
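To make the link between memory speed and chip speed concrete, here is a minimal back-of-the-envelope sketch. It assumes the simplest case, where generating each token requires reading all of the model's weights from memory once, so memory bandwidth caps the output rate. The function name and every number are hypothetical placeholders chosen for round arithmetic, not specifications of any real chip or model.

```python
# Rough upper bound on text-generation speed when memory bandwidth is the limit.
# All numbers below are illustrative assumptions, not measurements of real hardware.

def max_tokens_per_second(bandwidth_gb_per_s: float, model_size_gb: float) -> float:
    """Cap on tokens per second, assuming each generated token requires
    reading the full set of model weights from memory exactly once."""
    if model_size_gb <= 0:
        raise ValueError("model_size_gb must be positive")
    return bandwidth_gb_per_s / model_size_gb

MODEL_SIZE_GB = 100.0          # hypothetical size of the model weights
NEAR_MEMORY_GB_PER_S = 3000.0  # hypothetical fast memory next to the processor
FAR_MEMORY_GB_PER_S = 100.0    # hypothetical slower memory farther away

fast = max_tokens_per_second(NEAR_MEMORY_GB_PER_S, MODEL_SIZE_GB)
slow = max_tokens_per_second(FAR_MEMORY_GB_PER_S, MODEL_SIZE_GB)
print(f"near memory: {fast:.0f} tokens/s, far memory: {slow:.0f} tokens/s")
```

Under these assumed numbers, the same processor is limited to a small fraction of its potential output when fed from the slower memory, which is why the memory, and not the processor itself, can be the bottleneck.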

Why HBM Manufacturing Has Low Yields

Another thing to consider is how HBM is manufactured. While ordinary memory chips are laid flat, HBM stacks memory dies vertically, one layer on top of another. Manufacturers etch thousands of microscopic holes, called through-silicon vias (TSVs), through the layers and fill them with metal. These connections carry power and data between layers.

After that, the whole stack is pressed with high heat and pressure. Too much heat warps the chips. Too little, and the layers do not bond properly. The holes must also line up precisely: a misaligned connection can ruin the entire stack.

As a result, more than half of what factories try to make gets thrown away. So even though the factories run continuously, they cannot produce enough to meet demand.
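The yield problem compounds with stack height, which a short calculation can illustrate. The sketch below assumes each layer succeeds or fails independently with the same probability, which is a simplification. The function names, the 90 percent per-layer figure, and the 8-layer stack are hypothetical values picked for illustration, not reported factory data.

```python
import math

def stack_yield(per_layer_yield: float, layers: int) -> float:
    """Chance that every layer in a stack is good, assuming layers fail
    independently with the same probability (a simplifying assumption)."""
    if not 0.0 < per_layer_yield <= 1.0:
        raise ValueError("per_layer_yield must be in (0, 1]")
    if layers < 1:
        raise ValueError("layers must be at least 1")
    return per_layer_yield ** layers

def stacks_to_start(good_stacks_needed: int, yield_fraction: float) -> int:
    """Number of stacks a factory must begin to end up with the needed good ones."""
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in (0, 1]")
    return math.ceil(good_stacks_needed / yield_fraction)

# Hypothetical inputs: 90% success per layer, 8 layers per stack.
overall = stack_yield(0.90, 8)
needed = stacks_to_start(1000, overall)
print(f"overall yield: {overall:.1%}, stacks started per 1000 good: {needed}")
```

Even with a per-layer success rate that sounds high, the overall yield in this example falls below half, so the factory has to start more than two stacks for every good one it ships.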

The HBM Memory Shortage Hits a Three-Company Bottleneck

Unfortunately, only three companies on Earth currently make HBM: SK Hynix, Samsung, and Micron. Making it requires years of specialized knowledge and billions of dollars in equipment.

In addition, each HBM stack is custom made for specific AI chips, so nobody can simply decide to order HBM on short notice. Companies like Nvidia must place orders years in advance.

Therefore, when one chip maker needs more HBM, the suppliers cannot shift production overnight. At the same time, all the major AI chip makers are fighting over the same tiny supply.

In April 2026, SK Hynix cut shipments to Nvidia by 30 percent. As a result, fewer AI chips will be made and fewer models can be trained.

How the HBM Memory Shortage Slows Down AI Tools

No HBM means no finished AI chips. No chips means OpenAI and Google cannot train bigger models. Users feel this directly: with too little hardware to go around, current AI models will start to respond more slowly.

However, users may notice other problems too. The AI forgets what the user mentioned minutes earlier, because keeping a long conversation in view also takes up scarce memory. Also, features like video generation keep getting pushed to “next year.”

In addition, companies may raise prices or limit free access. Because HBM is so scarce, the hardware underneath AI services has become costlier.

When Will the Delays Finally End?

Even though companies are building new HBM factories, it takes time. SK Hynix is spending billions on a plant in Indiana, but it will not open until late 2028. Samsung and Micron face similar timelines.

Because of this, meaningful relief is not expected until 2027 or 2028. There is some hope: better stacking technology could improve yields. Still, 2026 and most of 2027 are expected to remain extremely tight.

Ultimately, the delays may last for years, and improvements remain uncertain.