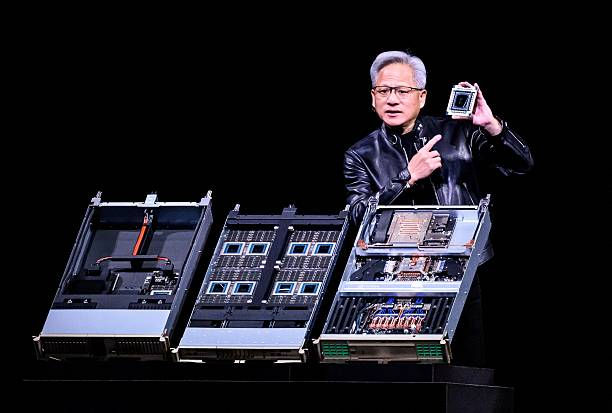

At Nvidia’s annual GTC developer conference in San Jose, California, CEO Jensen Huang declared that a modern data center should be viewed as a factory that produces tokens, not just a warehouse for compute and storage.

Nvidia now describes its AI infrastructure stack as an “AI factory” built to “manufacture intelligence at scale,” with token generation presented as the core output of the system.

With this, Huang formally repositioned Nvidia’s identity around a new concept: the AI factory, a system built to continuously generate AI output measured in tokens rather than only store and retrieve data.

What Huang Means

According to Huang, tokens are the basic unit of AI output, and inference is the work that turns compute into those tokens.

Huang described a fundamental shift in how data centers should be understood, arguing that traditional data centers will be reimagined as token-producing AI factories. Traditional data centers were built to store and retrieve data, whereas AI factories are designed to produce intelligence in the form of tokens.

Tokens, which are the units of text, images, or audio that AI models generate, are now the measurable output by which Nvidia wants operators, investors, and enterprise buyers to evaluate their infrastructure. “This is your token factory, this is your AI factory, this is your revenue,” Huang said on stage. “Our cost per token is the lowest in the world.”

Instead of treating a data center as a place that simply hosts digital services, Huang now describes it as an industrial system with inputs, production, and output. This framing matters because it may eventually change how the industry talks about AI infrastructure.


Huang has argued that as AI systems move from chatbots to reasoning models and agents, they use more computing power and drive more token consumption. That makes the economics of inference more important, because the cost and efficiency of producing tokens become a central business concern.

Nvidia Business Case

The rebranding also supports Nvidia’s commercial strategy. By describing data centers as AI factories, Huang places Nvidia’s chips, networking, software, and reference designs at the center of the AI buildout. That helps position the company not just as a GPU supplier, but as the architect of the whole production system.

Nvidia says its AI factory approach combines accelerated infrastructure and AI software into one stack, with the aim of improving deployment speed, security, and return on investment.

What This Means for Businesses

For companies buying AI infrastructure, the shift in language implies a different way of measuring value. Instead of judging a data center mainly by storage capacity or server counts, Huang’s model pushes buyers to focus on output, efficiency, and the cost of each token produced.

That matters because AI budgets keep growing. Huang has said tokens will become a regular line item in corporate spending, similar to laptops or software subscriptions. In other words, the token-factory concept encourages AI-focused businesses to track AI usage as a measurable business output.